Synopsis

Introduction

Digital banking automates traditional banking via digital platforms, enabling 24/7 access to accounts, transfers, payments, and loans from mobiles or computers. Customers manage everything online without paperwork or visits, often with features like real-time notifications and biometric security. It contrasts with branch-based banking by prioritizing speed and convenience.

Open banking uses APIs to share customer data securely between banks and third parties, fostering innovation. Embedded banking integrates financial services, such as payments or loans, directly into apps from non-financial businesses, such as ride-sharing or e-commerce platforms. Customers complete transactions without leaving the host app, with the underlying services powered by Banking-as-a-Service (BaaS) providers.

In the race to deliver seamless, personalized, and app-like experiences, financial institutions have opened their once tightly controlled ecosystems to a web of third-party platforms, APIs, and digital channels. While this transformation has unlocked new revenue streams and customer engagement models, it has also introduced new risk vectors, from data leakage and fraud to regulatory non-compliance and reputational damage.

If you work in a risk function, you live this paradox every day. Customers want banking that feels effortless and intuitive, while regulators expect bulletproof controls, explainability, and zero tolerance for data misuse. The good news is that AI can help you deliver both, provided you embed it into products, partnerships, and operations with risk controls built in up front, not bolted on later.

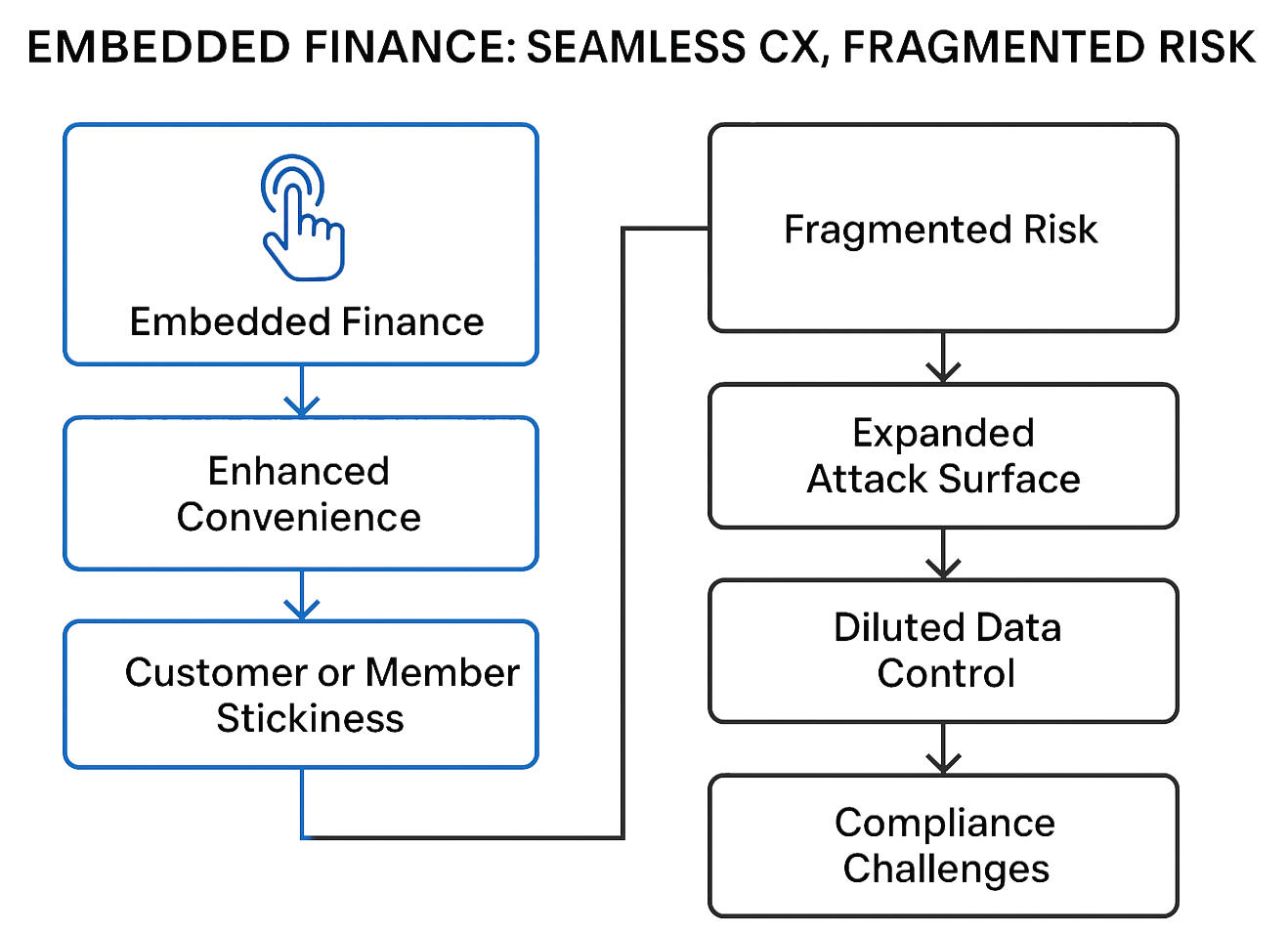

1. Embedded Finance: Seamless CX, Fragmented Risk

The Apple Card partnership illustrates the challenge: Goldman Sachs ultimately agreed to transition the program to Chase after losses and servicing friction, including calendar-based billing that created peak volumes for dispute handling. In 2024, the CFPB also ordered compensation for customer service breakdowns.

What works: Apply AI behavioral analytics at the edge (where payments are initiated) to spot anomalies quickly, and use progressive underwriting so risk is assessed with every transaction, not just at origination. JPMorgan describes this “re-underwrite every increment” approach in its WePay integration, improving risk visibility at the SMB scale.
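
To make the idea of assessing risk with every transaction concrete, here is a minimal sketch. It is not JPMorgan's method: it assumes a simple z-score check against a merchant's own running payment history, and the names `MerchantProfile`, `assess`, and the `z_limit` threshold are hypothetical. A production system would use far richer behavioral signals.

```python
import math
from dataclasses import dataclass


@dataclass
class MerchantProfile:
    """Running payment statistics for one merchant (Welford's online algorithm)."""
    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, amount: float) -> None:
        self.count += 1
        delta = amount - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (amount - self.mean)

    def zscore(self, amount: float) -> float:
        # With fewer than two observations there is no variance estimate yet.
        if self.count < 2:
            return 0.0
        std = math.sqrt(self.m2 / (self.count - 1))
        return 0.0 if std == 0 else (amount - self.mean) / std


def assess(profile: MerchantProfile, amount: float, z_limit: float = 3.0) -> str:
    """Score a payment against history; only approved payments update the profile."""
    decision = "review" if abs(profile.zscore(amount)) > z_limit else "approve"
    if decision == "approve":
        profile.update(amount)
    return decision


# Illustrative amounts only: four ordinary payments, then an outlier.
profile = MerchantProfile()
decisions = [assess(profile, a) for a in [100.0, 110.0, 95.0, 105.0, 5000.0]]
print(decisions)
```

Because the profile is refreshed on each approved payment, the risk view moves with the merchant's behavior instead of staying fixed at onboarding.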

2. Open Banking: Unlocking Innovation, Unleashing Risk

Account aggregation and consented data sharing unlock better personal finance, lending, and cash management. But every new API is a new handshake with entities you don’t fully control. Research shows anomaly detection models can flag suspicious access behavior across open banking APIs, and industry analysts warn about credential abuse, scraping, and misconfigurations that expand your attack surface.

Plaid provides open banking solutions through fast, reliable, and secure API-based access. It enables users to connect their financial institution accounts to apps like Venmo and Robinhood. While this improves customer convenience, it also introduces data privacy risks and regulatory scrutiny. Financial institutions must ensure that third-party access is secure, auditable, and compliant.

What works: Monitor API traffic with AI in real time, automate the consent lifecycle (granular scopes, expiry, revocation), and score third-party risk continuously. Treat API management as digital trust governance, not just routing and performance.
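
The consent lifecycle can be pictured as a small record that every API call is checked against. The sketch below is an illustration under stated assumptions, not any provider's actual API: the `Consent` class, its fields, and scope names such as `accounts:read` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Consent:
    """One customer's consent for one third party (hypothetical schema)."""
    customer_id: str
    third_party: str
    scopes: frozenset          # granular permissions the customer granted
    expires_at: datetime       # consent lapses automatically at this time
    revoked_at: Optional[datetime] = None

    def revoke(self, now: datetime) -> None:
        self.revoked_at = now

    def allows(self, scope: str, now: datetime) -> bool:
        """Permit a call only if consent is unrevoked, unexpired, and in scope."""
        if self.revoked_at is not None:
            return False
        if now >= self.expires_at:
            return False
        return scope in self.scopes


now = datetime(2026, 1, 1, tzinfo=timezone.utc)
consent = Consent(
    customer_id="cust-1",
    third_party="budget-app",
    scopes=frozenset({"accounts:read", "transactions:read"}),
    expires_at=now + timedelta(days=90),
)

can_read = consent.allows("transactions:read", now)                 # granted scope
can_pay = consent.allows("payments:write", now)                     # never granted
expired = consent.allows("accounts:read", now + timedelta(days=91))
consent.revoke(now + timedelta(days=1))
after_revoke = consent.allows("accounts:read", now + timedelta(days=2))
```

Checking consent on every request, not only at connection time, is what makes expiry and revocation take effect immediately.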

3. Fintech Partnerships: Agility vs. Accountability

4. Crypto, Stablecoins & Tokenization: Build Controls Where Customers Transact

Stablecoins are maturing, too. PayPal’s PYUSD launched in 2023 with 1:1 reserves and NYDFS-regulated issuance via Paxos; in 2025, PayPal disclosed the SEC closed its PYUSD inquiry without enforcement, reducing regulatory overhang for payment-use stablecoins.

What works: Enforce transaction-level AI for AML/fraud (including smart-contract scanning where relevant), maintain risk-adjusted product limits, and require reserve attestation + redemption SLAs for any stablecoin capability.

5. Tokenization: Your Best Defense (and a Revenue Enabler)

At network scale, Visa reports 10+ billion tokens issued, $650M in fraud savings in the past year, and improved approval rates, evidence that tokenization is now both a security and a growth lever.

What works: Push network tokens across all channels (including wallets), keep token lifecycle under AI-assisted governance, and use updater services to reduce false declines and failed recurring payments.
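
Network tokens are issued and managed by the card networks, so the following is only a toy, vault-style illustration of the general idea: a surrogate value stands in for the card number, and its lifecycle state controls whether it can be used. `TokenVault`, `TokenState`, and the method names are hypothetical and do not reflect any network's interface.

```python
import secrets
from enum import Enum


class TokenState(Enum):
    ACTIVE = "active"
    SUSPENDED = "suspended"
    DELETED = "deleted"


class TokenVault:
    """Toy in-memory vault mapping random tokens to card numbers (PANs)."""

    def __init__(self) -> None:
        self._pan_by_token = {}
        self._state_by_token = {}

    def tokenize(self, pan: str) -> str:
        token = secrets.token_hex(8)  # random surrogate, not derived from the PAN
        self._pan_by_token[token] = pan
        self._state_by_token[token] = TokenState.ACTIVE
        return token

    def set_state(self, token: str, state: TokenState) -> None:
        if token not in self._state_by_token:
            raise KeyError("unknown token")
        self._state_by_token[token] = state

    def detokenize(self, token: str) -> str:
        # Only active tokens resolve back to the underlying card number.
        if self._state_by_token.get(token) is not TokenState.ACTIVE:
            raise PermissionError("token is not active")
        return self._pan_by_token[token]


vault = TokenVault()
pan = "4111111111111111"  # widely used test card number, not a real account
token = vault.tokenize(pan)
recovered = vault.detokenize(token)

# Suspending the token blocks its use without touching the underlying card.
vault.set_state(token, TokenState.SUSPENDED)
try:
    vault.detokenize(token)
    blocked = False
except PermissionError:
    blocked = True
```

The point of the lifecycle state is that a compromised or stale token can be suspended or deleted on its own, which is the part a governance process would monitor.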

6. The App-Like Experience: A Double-Edged Sword

Customers expect 24/7 help, proactive insights, and micro-personalization, delivered safely. Two useful blueprints:

- Wells Fargo – Fargo: handled 245M+ interactions in 2024 using a privacy-first orchestration layer that scrubs and tokenizes requests before any model call. No sensitive data is passed to the LLM, yet the assistant still delivers intent detection and actioning via internal APIs.

- Bank of America – Erica: surpassed 2 billion lifetime interactions in April 2024 and 3 billion by August 2025, combining proactive insights with fast self-service while reducing call center load; proof that AI service can scale with strong governance.

What works: Keep PII outside model contexts, enforce human-in-the-loop for edge cases, and log explainability artifacts for audit.
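
As a rough sketch of keeping PII outside model contexts, the snippet below replaces sensitive values with placeholders before text would be sent to a model, and keeps the originals in a local mapping for later use by internal systems. This is an assumption-laden illustration, not Wells Fargo's implementation: the `scrub` function and the three regular expressions are hypothetical and far from exhaustive.

```python
import re

# Illustrative patterns only; real deployments need broader, validated detectors.
PATTERNS = {
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,18}\b"),   # 13 to 19 digits
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def scrub(text: str):
    """Return (scrubbed_text, vault), where vault maps placeholders to originals."""
    vault = {}
    for kind, pattern in PATTERNS.items():
        def replace(match, kind=kind):
            placeholder = f"<{kind}_{len(vault) + 1}>"
            vault[placeholder] = match.group(0)
            return placeholder
        text = pattern.sub(replace, text)
    return text, vault


message = "Card 4111 1111 1111 1111 was declined, email me at jane@example.com"
safe_text, vault = scrub(message)
print(safe_text)
# Card <CARD_1> was declined, email me at <EMAIL_2>
```

Only `safe_text` would cross the boundary to the model; the `vault` stays inside the bank's own systems so that downstream actions can still reference the real values.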

7. AI Governance: Regulators Want Explainability, Controls, and Third-Party Oversight

AI is expanding quickly across underwriting, fraud, servicing, marketing, and collections. And with that expansion comes increased model-risk exposure, fair-lending scrutiny, and heightened third-party governance responsibilities, especially since so many models and datasets now originate from external providers. Regulators are paying close attention to how financial institutions validate models, mitigate bias, protect consumer data, and ensure that outsourced AI doesn’t create unmanaged risks.

What works: A practical approach looks like this:

- Map every AI system to existing risk frameworks – operational risk, model risk (SR 11-7 style), compliance, privacy, and UDAAP.

- Strengthen vendor governance by requiring AI-specific attestations from suppliers (data sources, model lineage, training methods, testing procedures, fairness controls, cybersecurity posture).

- Maintain audit-ready documentation, including data lineage, model cards, testing protocols, performance reporting, human-oversight checkpoints, and intervention logs.

What to Do Next: A Practical Checklist

- Embed risk early in the customer experience

Instrument onboarding flows, payments, and service interactions with real-time anomaly detection and decision-intervention points. This helps catch fraud, identity issues, and AI-driven decision errors before they impact customers.

- Harden API ecosystems

Treat APIs as a regulated trust boundary. Use AI-enabled traffic monitoring, consent-management controls, and strict authentication to manage risks associated with data sharing, open-banking connectivity, and fintech integrations.

- Treat tokenization as a default security layer

Whether it’s wallets, e-commerce flows, or card-on-file, tokenization helps reduce fraud and improve authorization quality. Use AI monitoring to assess token health, suspicious patterns, and credential-based attacks.

- Operationalize model-risk management for AI

Create explainability documentation, fairness/bias testing routines, and policies for human-in-the-loop oversight. Make sure third‑party models, LLMs, scoring engines, fraud tools, and biometric solutions are included in your MRM inventory and validated with the same rigor as internal models.

- Monitor reimbursement and liability rules that affect financial institutions

The regulatory landscape is evolving through CFPB guidance, Reg E interpretations, and state-level data and liability rules. Strengthening payee verification, confirmation signals, and inbound-payment controls helps prepare for potential future changes in reimbursement expectations.

Bottom Line

Author

Paresh Ashara

Paresh is a Vice-President at Quinte Financial Technologies, managing Data Analytics, AI & Automation solutions & services. He has over 26 years of IT services and product engineering experience in the BFSI vertical. He is passionate about data and advanced analytics and has dabbled in creating solutions leveraging Generative AI and Agentic AI technologies.

Source: This article was originally published in “Intelligent Risk by PRMIA” in March 2026.

References:

- Embedded Finance: A Strategic Roadmap for Banks https://mobeyforum.org/mobey-forum-unveils-new-report-embedded-finance-a-strategic-roadmap-for-banks/

- The Risks of AI in Banking https://www.ncontracts.com/nsight-blog/risks-of-ai-in-banking

- Apple Card – Apple https://www.apple.com/apple-card/

- Integrating WePay Into Our Modern Commerce Platform | J.P. Morgan https://www.jpmorgan.com/insights/payments/merchant-services/wepay-integration

- Dynamic Micro-Personalization in Banking: Case Studies https://superagi.com/dynamic-micro-personalization-in-banking-case-studies-on-ai-driven-customer-experiences/

- Erica – Virtual Financial Assistant https://info.bankofamerica.com/en/digital-banking/erica

- Risk Management in Open Banking: Beyond Fraud Detection | Vellis https://www.vellis.financial/blog/vellis-news/open-banking-risk-management

- Revolut prevents $13.5M of ‘potential fraud transactions’ in crypto https://cointelegraph.com/news/revolut-prevents-14-million-fraud-crypto-3-months

- Payment token format reference | Apple Developer Documentation https://developer.apple.com/documentation/passkit/payment-token-format-reference

- Visa Issues 10 Billionth Token, Generating $40 Billion in Incremental E-commerce Globally | Visa https://usa.visa.com/about-visa/newsroom/press-releases.releaseId.20701.html

- Why tokens are key to future proofing your payments | Visa Acceptance Solutions https://www.visaacceptance.com/en-us/blog/article/2025/tokens-are-key-to-future-proofing-payments.html

- Wells Fargo’s AI assistant just crossed 245 million interactions – no human handoffs, no sensitive data exposed | VentureBeat https://venturebeat.com/ai/wells-fargos-ai-assistant-just-crossed-245-million-interactions-with-zero-humans-in-the-loop-and-zero-pii-to-the-llm

- BofA’s Erica Surpasses 2 Billion Interactions, Helping 42 Million Clients Since Launch | Press Releases | Newsroom | Bank of America https://newsroom.bankofamerica.com/content/newsroom/press-releases/2024/04/bofa-s-erica-surpasses-2-billion-interactions--helping-42-millio.html

- A Decade of AI Innovation: BofA’s Virtual Assistant Erica Surpasses 3 Billion Client Interactions | Press Releases | Newsroom | Bank of America https://newsroom.bankofamerica.com/content/newsroom/press-releases/2025/08/a-decade-of-ai-innovation--bofa-s-virtual-assistant-erica-surpas.html